I know

After playing around a lot in that engine, you notice that apart from the netcode, nearly everything else is either a crappy hack or completely insufficient for anything except a Half-Life or Counter-Strike style shooter.

Also note: while the netcode articles are a convenient pointer (and probably a prerequisite) to anyone wanting to understand the principles underlying SimEthereal, there is quite a bit that is different about my approach.

For one thing, I wanted to be able to efficiently support a lot of objects… so something more clever than ‘blast all the state’ was needed. Then once you start sending only deltas over UDP then you need to get a bit more complicated with a bit of transient reliability. I’d like to think my solution is pretty clever but I’m sure it’s been done before.

I keep trying to think of a diagram that would easily explain the double-ack baselining that I do. If I come up with one then you guys will be the first to know. I’ve had to reteach it to myself a few times now so having something to point to would be good.

Which, to be fair, was all it was originally written to be.

Sure

Actually my own networking is pretty similar, but instead of UDP with a homegrown reliability layer I use TCP for the reliable, slow traffic.

(It really does not matter if a ship 500+ meters away is slightly offset.) I use UDP only for the nearby stuff that can actually change.

So far I would say that for everything not really fast, TCP plus a lot of interpolation seems adequate and avoids quite a few problems.

The problem I’ve had with TCP in the past is that a hiccup can cause the data to back up for 1-2 seconds. So you get this 2 second pause and then suddenly a blast of messages.

I use UDP to send the deltas from a known good baseline that the client and server have agreed upon. The client acknowledges the messages that it gets (and keeps acknowledging). When the server receives one of these ack messages, it moves the baseline up to where the client’s messages say it should be and then starts sending a double-ack for those messages. That way the client knows that the message it received is based on the new baseline.
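A minimal sketch of the ack / double-ack idea described above, seen from the server side. The class and method names (`BaselineTracker`, `send`, `onAck`) are made up for illustration and are not SimEthereal's API; state is reduced to an array of longs and a delta to a plain subtraction.

```java
import java.util.TreeMap;

/** Hypothetical server-side bookkeeping for one client; not SimEthereal's actual classes. */
final class BaselineTracker {
    // Snapshots that were sent but are not yet the agreed baseline, keyed by message sequence.
    private final TreeMap<Integer, long[]> pending = new TreeMap<>();
    private long[] baseline;      // last state both sides agree on
    private int baselineSeq = -1; // sequence number of that state

    BaselineTracker(long[] initialState) {
        this.baseline = initialState.clone();
    }

    /** Remembers the state sent as message seq and returns its delta against the baseline. */
    long[] send(int seq, long[] state) {
        pending.put(seq, state.clone());
        long[] delta = new long[state.length];
        for (int i = 0; i < state.length; i++) {
            delta[i] = state[i] - baseline[i];
        }
        return delta;
    }

    /**
     * The client acked message seq. Moves the baseline forward if the ack is newer,
     * and returns the sequence to echo back to the client as the double-ack.
     */
    int onAck(int seq) {
        long[] acked = pending.get(seq);
        if (acked != null && seq > baselineSeq) {
            baseline = acked;
            baselineSeq = seq;
            pending.headMap(seq, true).clear(); // older snapshots are stale now
        }
        return baselineSeq;
    }
}
```

In this sketch a lost or repeated ack only delays the baseline moving forward; nothing is queued for resending, and newer state simply replaces older state.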

This is different than a true reliable protocol because stale data is still discarded and overwritten. A reliable protocol (like TCP) will deliver everything even when it’s crusty and old and no longer relevant.

The protocol also means that for objects not moving, that object’s individual state update is only 28 bits or something. (zone ID + network ID plus a few bits indicating that the data has not changed.)

Rotation and position are packed into bit streams… with the resolution of position greatly reduced since all positions are relative to the zone. For example, my SimEthereal example packs a Vector3f position into three 16 bit values. Quaternions are packed into four 12 bit values. So 48 bits each for position and rotation plus another bit each for ‘no change’ or not. So even a normal object update takes only about 124 bits… i.e. 16 bytes with four bits to spare. Since everything is packed into a bit stream (which by the way are completely usable outside of SimEthereal) those four bits add up also. After 31 objects, I can fit a 32nd one in for free, basically.
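To make the bit widths concrete, here is a rough sketch of quantizing a zone-relative position into three 16 bit values and a quaternion into four 12 bit values. The `Quantize` class is hypothetical, and the assumption that positions lie in `[0, zoneSize]` is mine; the real bit stream and packing classes live in SimEthereal and SimMath.

```java
/** Hypothetical helpers for illustration; not the SimMath or SimEthereal API. */
final class Quantize {
    /** Maps value in [min, max] to an unsigned integer that fits in the given number of bits. */
    static int pack(float value, float min, float max, int bits) {
        int maxInt = (1 << bits) - 1;
        float clamped = Math.max(min, Math.min(max, value));
        return Math.round((clamped - min) / (max - min) * maxInt);
    }

    /** Inverse of pack, up to quantization error. */
    static float unpack(int packed, float min, float max, int bits) {
        int maxInt = (1 << bits) - 1;
        return min + (packed / (float) maxInt) * (max - min);
    }

    /** Three 16 bit components in the low 48 bits of a long (assumes 0 <= x, y, z <= zoneSize). */
    static long packPosition(float x, float y, float z, float zoneSize) {
        long px = pack(x, 0f, zoneSize, 16);
        long py = pack(y, 0f, zoneSize, 16);
        long pz = pack(z, 0f, zoneSize, 16);
        return (px << 32) | (py << 16) | pz;
    }

    /** Four 12 bit components, each in [-1, 1], in the low 48 bits of a long. */
    static long packRotation(float x, float y, float z, float w) {
        long bits = 0;
        for (float c : new float[] {x, y, z, w}) {
            bits = (bits << 12) | pack(c, -1f, 1f, 12);
        }
        return bits;
    }
}
```

With a 64 unit zone (an arbitrary figure for the example), 16 bits gives steps of roughly a thousandth of a unit, which is why the reduced resolution is rarely visible.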

But that’s why I can fit so many object updates in a small UDP packet. I think by default I clamp it at ~1400 to try and stay under a typical MTU size (so the UDP packets are less likely to be split in the network stack). In theory that lets me send 90 object updates in a 1500 byte message… there may be some additional frame protocol stuff that I’m forgetting.
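The arithmetic behind that estimate, using the figures from the posts above (about 124 bits for a full update, about 28 bits for an unchanged object) and ignoring any per-message header, which is not specified here. `PacketBudget` is just a throwaway name for the example.

```java
/** Back-of-the-envelope packet budget; header overhead is deliberately ignored. */
final class PacketBudget {
    /** How many updates of bitsPerUpdate bits fit into packetBytes bytes. */
    static int updatesPerPacket(int packetBytes, int bitsPerUpdate) {
        return (packetBytes * 8) / bitsPerUpdate;
    }
}
```

`updatesPerPacket(1400, 124)` gives 90, so the "90 object updates" figure matches the ~1400 byte clamp. A full 1500 bytes would hold 96 such updates, and 1400 bytes would hold 400 updates for objects that have not changed.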

If they can fit in the message, then I try to pack three frames at a time (this actually depends on how you call the network code but that’s the default). But I will defer a frame’s state to a new message or even split a frame to try to keep it under the current estimated MTU.
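One way to picture the "up to three frames per message, defer or split to stay under the MTU" behaviour is a greedy packer like the one below. This is only a guess at the shape of the logic under simplified assumptions (frames are just byte counts, and `mtuBytes` must be positive); `FramePacker` is a hypothetical name and the real code may work quite differently.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical greedy packer; returns the byte size of each outgoing message. */
final class FramePacker {
    static List<Integer> pack(int[] frameBytes, int maxFramesPerMessage, int mtuBytes) {
        List<Integer> messages = new ArrayList<>();
        int used = 0;   // bytes already in the current message
        int frames = 0; // frames (or frame pieces) in the current message
        for (int size : frameBytes) {
            int remaining = size;
            while (remaining > 0) {
                boolean full = frames >= maxFramesPerMessage || used == mtuBytes;
                boolean fits = used + remaining <= mtuBytes;
                // Defer to a new message when this one is full, or when the frame
                // would fit whole in an empty message but not in what is left here.
                if (full || (!fits && used > 0 && remaining <= mtuBytes)) {
                    messages.add(used);
                    used = 0;
                    frames = 0;
                    continue;
                }
                // Otherwise take as much as fits; an oversized frame is split.
                int chunk = Math.min(remaining, mtuBytes - used);
                used += chunk;
                remaining -= chunk;
                frames++;
            }
        }
        if (used > 0) {
            messages.add(used);
        }
        return messages;
    }
}
```

For example, four 400 byte frames with a limit of three frames and 1400 bytes come out as messages of 1200 and 400 bytes, while a single 3000 byte frame is split into 1400, 1400 and 200.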

Edit: bitstream classes if anyone is curious:

Ability to pack Vector3f/Quaternions/etc. easily into bitstreams is part of my SimMath package:

I know, but that seems to be solved if you plan to release in like 3+ years

(Linux has supported a similar system for quite a while, and most likely the RFC will become a specification now that a large percentage supports it without knowing.)

I actually do not send any data for non-changing objects (which is probably good for a space game with many static parts).

E.g. all spaceship parts usually do not change in relation to their parent (I send parenting information in the protocol).
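A small sketch of the "send nothing for unchanged objects" idea: remember what was last sent per entity and only emit the ones whose state differs. `ChangeFilter` and the use of a single `Long` as the state are placeholders for illustration, not anyone's actual protocol code.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical filter that reports only entities whose state changed since the last call. */
final class ChangeFilter {
    private final Map<Integer, Long> lastSent = new HashMap<>();

    /** Returns the ids in current whose state differs from what was last recorded. */
    List<Integer> changed(Map<Integer, Long> current) {
        List<Integer> out = new ArrayList<>();
        for (Map.Entry<Integer, Long> e : current.entrySet()) {
            Long previous = lastSent.put(e.getKey(), e.getValue());
            if (!e.getValue().equals(previous)) {
                out.add(e.getKey()); // new entity or changed state
            }
        }
        return out;
    }
}
```

If the state is stored relative to a parent, a ship part that only moves with its ship never shows up in the changed list. Over a lossy transport this needs some way to know the last update actually arrived before it can be treated as "last sent".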

I have not really implemented compression for the positions and rotations yet. I did experiment with 1 byte float precision though, giving roughly <2 degrees accuracy, so a quaternion is only 4 bytes.
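For reference, a sketch of what one byte per quaternion component could look like. The scale factor of 127 and the renormalization on unpack are my assumptions, not necessarily what was used in the experiment described above; `ByteQuat` is a made-up name.

```java
/** Hypothetical 4 byte quaternion encoding: one signed byte per component. */
final class ByteQuat {
    /** Components are expected in [-1, 1]; values outside are clamped. */
    static byte[] pack(float x, float y, float z, float w) {
        float[] c = {x, y, z, w};
        byte[] out = new byte[4];
        for (int i = 0; i < 4; i++) {
            float clamped = Math.max(-1f, Math.min(1f, c[i]));
            out[i] = (byte) Math.round(clamped * 127f);
        }
        return out;
    }

    /** Decodes and renormalizes, since quantization breaks unit length slightly. */
    static float[] unpack(byte[] in) {
        float[] q = new float[4];
        float lenSq = 0f;
        for (int i = 0; i < 4; i++) {
            q[i] = in[i] / 127f;
            lenSq += q[i] * q[i];
        }
        if (lenSq == 0f) {
            return new float[] {0f, 0f, 0f, 1f}; // fall back to identity
        }
        float inv = (float) (1.0 / Math.sqrt(lenSq));
        for (int i = 0; i < 4; i++) {
            q[i] *= inv;
        }
        return q;
    }
}
```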

I had some really bad burns with UDP, in that some network devices between me and a friend actually dropped all UDP packets larger than the minimum required size, because that is allowed by the specification.

I will most likely use the last link and kind of apply that to my layer directly.

So do I, by the way… but only if it’s changed.

Edit: and note: the idea is that truly static data is handled normally like any other entity data.

Edit2: also note, I cut-pasted some of the above response over to the other thread that’s actually on topic.

It seems that you could add support for different priorities in your network stack though.

E.g. a death notification is more important than a slight movement change of another object, so I allow my client to see state that is partly up to date and partly in the past; for most component types the allowed lag increases with distance.

Actually every component can have special behaviour based on what I want to do, e.g. names are transmitted at low priority but always, positions are low priority for smaller and more distant changes, and switch to UDP blast mode for very near objects, etc.
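A rough sketch of per-component priorities like the ones just described, using a priority queue so the most urgent update goes out first. The `UpdateScheduler` class, the component kinds and the weights are all invented for the example.

```java
import java.util.Comparator;
import java.util.PriorityQueue;

/** Hypothetical scheduler that sends the most urgent pending update first. */
final class UpdateScheduler {
    enum Kind { DEATH, POSITION, NAME }

    static final class Update {
        final Kind kind;
        final float distance; // distance from the receiving player

        Update(Kind kind, float distance) {
            this.kind = kind;
            this.distance = distance;
        }

        /** Higher means more urgent. The weights are arbitrary example values. */
        float priority() {
            switch (kind) {
                case DEATH:
                    return 1000f;                  // always urgent
                case POSITION:
                    return 100f / (1f + distance); // nearby objects matter more
                default:
                    return 1f;                     // e.g. names: low priority, but never dropped
            }
        }
    }

    // Negated priority so that the queue head is the most urgent update.
    private final PriorityQueue<Update> queue =
            new PriorityQueue<>(Comparator.comparingDouble((Update u) -> -u.priority()));

    void offer(Update update) {
        queue.add(update);
    }

    /** Returns the most urgent pending update, or null if there is none. */
    Update next() {
        return queue.poll();
    }
}
```

With these weights a death notification goes out before a position 5 units away, which goes before a name, which goes before a position 500 units away.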

I’ll try and keep this short. Please take everything I say with a grain of salt. I’m not a programmer by profession, I write SQL and work on databases for a living.

I ended up leaving jMonkey because back in Feb 15 some people thought Oracle was going to deprecate Java, and I felt I might as well learn something else. Funny side note: I checked in on jMonkey when the site was down and thought it had been abandoned.

I poked around with Unity, UE4, BGE, and Lumberyard. Three out of the four lack decent Linux support. BGE, Lumberyard and UE4 (when I used it, before it went free) had spotty documentation, had some things locked behind paywalls, or the thing I wanted to use, like Paper2D (UE4), was not being developed or supported. Just a lot of non-technical reasons for no longer wanting to use these. Royalties were another concern. Portability was a concern too. Lots of things can run Java, but can a toaster run UE4, Lumberyard or Unity? I’m fairly sure the answer to that is no.

I tried Godot, but I did not like the overall structure of the engine or how scenes were to be managed.

Overall I like to keep my projects slim, and just something to mess around with. UE4 needs huge updates, Lumberyard is 40+ GB unpacked, and Unity is getting more and more bloated too. Godot is nice but is not the tool for the proverbial job I want to accomplish.

This is getting fairly lengthy, so I’m going to end this here. I’ll spare people the details and the obscure stuff.

If something like that happened for real, people would likely deprecate Oracle too…

There are already free implementations of the JDK (OpenJDK) that aren’t under Oracle’s control, I guess… but since the Google vs Oracle thing, I’m not sure anymore…

I am using @pspeed’s network layer on my game right now … It’s a space game, there are currently over 2000 ships in viewable sector space … over 1500 concurrent users on one server. Total bandwidth per connection is 10-11 Kbps. I have stress tested it in a real world environment and it is very stable. The server has been running for over 2 months without a reboot. I only reboot the server to install game updates.

Actually I asked Failfarm but it is my fail I didn’t quote him xD. We even had conversation about it some weeks ago.

Anyway thank you

rant

It’s garbage for shooters too. Recently there was an update, they made some minor changes to crouching animations - and guess what happened? Somehow it added a bug where if one player crouches while a different player is scoped with a specific gun (the auto sniper) - the player with the auto sniper will be able to run max speed while scoped in.

Spaghetti code.

I could go on all day honestly. Inaccuracy in CSGO is a function of your velocity, but in this case it only looks at x/z, not vertical movement. Result? People have been falling off of parts of the map and firing in mid air with 100% accuracy. Considering the fall speed you reach, they will in many cases shoot you before you’ve even seen them on your screen.

One of the biggest problems of all - you cannot shoot each other at the same time. Literally you can fire an entire clip into someone and not get any hits based on the fact that they are doing the same thing. What if you both shoot in the same update? Hardly farfetched at 64 updates per second.

Aim punch - your aim goes mad when you get shot. Again this is a massive problem with the server-client system and should be removed. If you record yourself playing you can shoot 100% accurately at a guy, but then look at the demo and see that in the server’s version you had already been shot and so your aim has been “punched” up into the air.

They “rewind” players’ positions to see whether they were in your aim when you clicked, regardless of them having moved away on their screen, so aim punch should have a similar system. As it is, it’s garbage.

It was also recently found that the code for accuracy is so messed up that you actually are more accurate while crouched and moving than when standing still.

Sound for grenades is missing in certain parts of certain maps. Literally they are seemingly random spots.

I got banned from competitive matchmaking for a time because a CSGO server crashed while it was running a game and their system assumed that I abandoned the match. ???

Animations are not synced properly, and so players can kill you when you can’t see them. And this isn’t a latency problem, it literally will just not let you see them because 3rd person crouch and 1st person crouch are just broken.

Their attempt to stop wall hacking is a disaster, and you can get killed by people you can’t see because the server thinks you shouldn’t be able to see them from a specific point despite there being a tight angle which allows you to.

All of this on a game that uses lightmaps and has mediocre graphics while STILL having major FPS problems on even the best systems. I get better frame rates on GTAV than this thing. Some spots in maps will drop me down from 160+ to < 40. This is a widely reported and ignored issue.

I used to play this game competitively and it’s a complete joke. I also used to do lots of modding stuff with source before I took up programming and it’s a nightmare. On the bright side it helped convince me to start programming since I figured there must be something better out there. (JME)

/rant

I don’t remember how many, but there were plenty, and there wasn’t any performance hit on the frame rate. I needed to raise it 100 times to get any noticeable frame drop. Even then it was playable.

I’m working on a shader node editor to get in touch with the shader node system. At this time it can only open a material and display its content. Later on I’ll make the design more readable. But I am unsure how far I will develop this project because big shaders seem to produce very confusing graphs.

This is what UnshadedNodes.j3md looks like:

IIRC the jMonkey SDK provides a shader node editor too. I would be happy if someone using it could post an image of the same material to give me an impression of it.

Edit: Changed image to show new design. This one is much better.

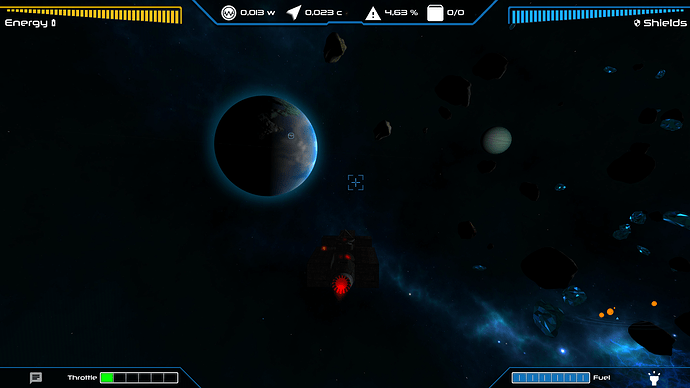

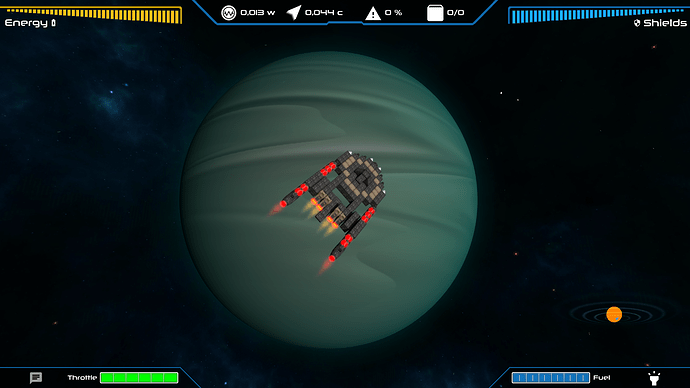

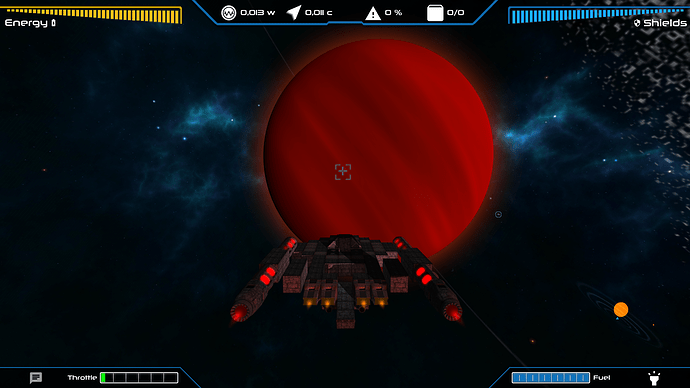

I updated the game HUD to include the new engine throttle (and fuel indicator for when fuel tanks are added).

Also made some changes to the planet shader: it now adds a color to the edge of the planet by doing a dot product between the view vector and the face normals.

Not exactly great in some cases…

And no effect at all in some other cases.

Oh and the game got into PAX West, as a part of Indie Megabooth (the minibooth subdivision to be exact)! Yay!

Shouldn’t it be a dot product between light vector and face normals?

The light vector? Wouldn’t that put the boosted edge on the part where the shadow meets the lighted area? I don’t think that’s what I’m going for.

It’s supposed to be the effect you get when you look at more layers of atmosphere, so at the view’s edges I’d say.
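The edge effect being discussed is usually computed from the dot product of the surface normal and the direction toward the viewer: it is near 1 at the centre of the planet's disc and near 0 at the silhouette. Below is the math written out in plain Java rather than shader code, as a sketch; `RimLight`, the function names and the `power` falloff parameter are my own, not taken from the actual planet shader.

```java
/** Illustrative rim (edge glow) factor; both vectors must be unit length. */
final class RimLight {
    static float dot(float[] a, float[] b) {
        return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
    }

    /**
     * Returns 0 where the surface faces the viewer and 1 at the silhouette edge.
     * A higher power gives a thinner rim.
     */
    static float rim(float[] normal, float[] toViewer, float power) {
        float facing = Math.max(0f, Math.min(1f, dot(normal, toViewer)));
        return (float) Math.pow(1.0 - facing, power);
    }
}
```

The rim factor would then be multiplied by the atmosphere color and added to the surface color. Because it depends only on the viewing angle, it produces a glow all the way around the disc regardless of where the sun is.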

Well, it would certainly be cool if the sun would light the atmosphere.

So … the dot product of the light vector and the inverse of the atmosphere normal would be 1.0 if the sun is right above the atmosphere. (I assume that the atmosphere is a sphere around the planet.) You could keep the intensity of the atmosphere very high until the dot product reaches values less than -0.1, or something like that.
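Written out as plain Java, that suggestion might look like the sketch below. It assumes the light vector is the direction the light travels (from the sun toward the planet) and the normal points outward; the linear fade below the cutoff is my own addition so the terminator is not a hard line, and `AtmosphereLight` is a hypothetical name.

```java
/** Illustrative sun-lit atmosphere factor; both vectors must be unit length. */
final class AtmosphereLight {
    /**
     * lightDir: direction the light travels. normal: outward atmosphere normal.
     * Returns 1 while dot(lightDir, -normal) >= cutoff, then fades linearly
     * to 0 over the next 'fade' units of the dot product.
     */
    static float intensity(float[] lightDir, float[] normal, float cutoff, float fade) {
        float d = -(lightDir[0] * normal[0] + lightDir[1] * normal[1] + lightDir[2] * normal[2]);
        if (d >= cutoff) {
            return 1f; // sun overhead, or only just past the horizon
        }
        float t = (d - (cutoff - fade)) / fade;
        return Math.max(0f, t);
    }
}
```

With `cutoff = -0.1f`, the atmosphere stays fully lit slightly past the terminator, which is the "keep it high until below -0.1" behaviour.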

Actually it’s just a layer of cloud texture stacked above the diffuse that moves with g_Time now. I used to have a second sphere with just the clouds and I just got rid of it to reduce the amount of geometries. It had no impact on performance lol.

I’m starting to think that these modern GPUs can render a gigantic amount of polygons but can’t evaluate a sine function in the frag shader without dying.

I’ll try doing that and applying it to the cloud layer and see what happens. Why is the g_LightDirection a vec4? A direction should be a regular vector, no?