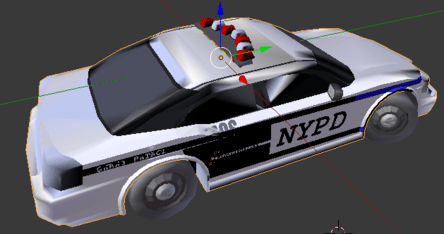

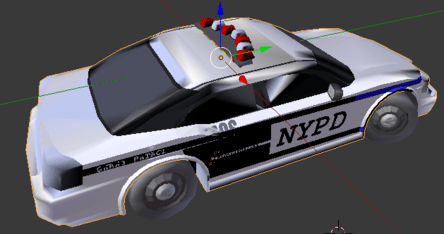

I am currently playing around with the ShapeNet dataset for some research and have noticed that many of the objects do not render correctly in Blender (an issue that has been brought up on the ShapeNet forums before). For example, the object found at ShapeNetCore/02958343/114b662c64caba81bb07f8c2248e54bc (a “NYPD Highway Patrol Dodge Charger”) looks like this in Blender:

but, in the ShapeNet Viewer (which uses jMonkeyEngine as the rendering engine), it looks fine (see my GitHub issue here for the picture). As was mentioned in the forum post, the models that do not render properly in Blender have a “bad topology”, e.g., they include “two-sided” faces and “flipped” faces as mentioned. So, my question is, HOW does jMonkeyEngine properly render these objects? I would like to be able to replicate its process so that I can use the models with different rendering pipelines.

I didn't know it used JME. But please note that Blender's actual render is under the F12 shortcut, not the viewport preview, which is simplified to work faster.

In Blender you have the “Solid”, “Textured”, “Material”, and “Rendered” viewport modes. “Rendered” can work with either “Blender Render” or “Cycles Render” (similar to JME's material system, if you compare, for example, the Principled BSDF with JME's PBRLighting material). Game rendering is also not the same as film rendering, and even two game renderers can give different results because they use different shaders.

So you can't really expect the same render result anyway.

I'm not sure why the text is partially hidden, but it looks like some kind of “location fight” (z-fighting) issue, where two surfaces sit at the same position.

But the main issue is that you use Texture or Material preview and you expect it to look the same.

About the flipped faces: you just need to flip the face normals (the face directions; you can try selecting everything in Edit Mode and pressing Ctrl-N), because it seems the model was imported incorrectly for some reason. You also need to provide more information about how you import it into JME or into Blender.

Also, one more thing: I think this is more of a Blender-related question.

Thanks for your reply. In this case, it looks like the difference has something to do with back-face culling. More details can be found in my comment on the GitHub issue.

It's not a back-face culling issue, because culling works as it should.

It looks like I fixed the issue by adding back-face culling.

You disabled culling there. Cull = hide; disabled = no hiding.

And it's not a proper fix for your model. Your “fix” is like hiding a hole in a car with some foil.

Like I said, you need to fix the face normals. When a face normal points toward the inside of the model, back-face culling will simply hide that face (or Blender will darken it if face culling is off).
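To make the point about normals and culling concrete, here is a minimal sketch of the usual back-face test. It assumes counter-clockwise winding marks the front of a triangle (a common default, but an assumption here), and the helper names are made up for illustration; they are not taken from JME, Blender, or the ShapeNet tooling.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def face_normal(v0, v1, v2):
    """Unnormalized normal implied by the vertex order (CCW = front, assumed)."""
    return cross(sub(v1, v0), sub(v2, v0))

def is_back_facing(v0, v1, v2, camera_pos):
    """True if the triangle's normal points away from the camera."""
    to_camera = sub(camera_pos, v0)
    return dot(face_normal(v0, v1, v2), to_camera) <= 0

camera = (0.0, 0.0, 5.0)
tri = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))  # CCW when seen from +z
flipped = (tri[0], tri[2], tri[1])                          # same triangle, reversed winding

front_culled = is_back_facing(*tri, camera)        # kept when culling is on
flipped_culled = is_back_facing(*flipped, camera)  # hidden when culling is on
```

With culling on, the flipped triangle is dropped even though it occupies exactly the same place as the original one; with culling off it is drawn, but lit as if it faced the other way, which is one way a face can end up looking dark.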

Disabling culling should be done only if your model really needs it.

> You disabled culling there. Cull = hide; disabled = no hiding.

You are looking at the wrong bit of code. In scene.py, self.CULL_FACES is set to True by default and I can toggle it on and off in my tool by pressing the F key.

> Disabling culling should be done only if your model really needs it.

Indeed, I have (higher quality) models (like a backpack with straps) that need back-face culling off, which is why I left it off in the paper_code repository.

> Like I said, you need to fix the face normals. When a face normal points toward the inside of the model, back-face culling will simply hide that face (or Blender will darken it if face culling is off).

The issue is that the ShapeNet models have duplicate faces (I’ve verified this myself), but they seem to have been created in software that has back-face culling on by default, so the weird artifacts weren’t noticeable (even though back-face culling shouldn’t have to be on for an object to render properly).

Duplicate faces explain a lot. They can create a lot of artifacts, especially if the normals of the duplicated faces are broken.
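For anyone who wants to check a mesh for this, here is a rough sketch of how duplicate triangles could be detected. It assumes the faces are triangles given as tuples of vertex indices and that duplicates share the same indices; if a file stores coincident vertices under different indices, they would need to be merged by position first. The function names are hypothetical.

```python
from collections import defaultdict

def find_duplicate_faces(faces):
    """Group triangles that use the same set of vertex indices.

    Winding is ignored, so a face and its flipped copy land in one group.
    Returns a list of groups, each a list of positions in `faces`.
    """
    groups = defaultdict(list)
    for i, face in enumerate(faces):
        groups[frozenset(face)].append(i)
    return [idxs for idxs in groups.values() if len(idxs) > 1]

def same_winding(a, b):
    """True if b is a cyclic rotation of a, i.e. both face the same way."""
    n = len(a)
    rotations = (tuple(a[(k + j) % n] for j in range(n)) for k in range(n))
    return any(rot == tuple(b) for rot in rotations)

faces = [(0, 1, 2), (2, 1, 0), (0, 2, 3), (1, 2, 0)]
duplicates = find_duplicate_faces(faces)

# Within each group, split copies into "same direction" and "flipped".
flipped_pairs = [(g[0], j) for g in duplicates for j in g[1:]
                 if not same_winding(faces[g[0]], faces[j])]
```

Here faces 0, 1 and 3 cover the same triangle, and face 1 is the flipped copy of face 0. A flipped duplicate is exactly the “two-sided” case: with back-face culling on, only one of the two copies is ever drawn, which would hide the problem.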

So it seems ShapeNet did something (face culling) the wrong way.