I want that code!!!

I’ve thrown a bit of physics into the mix. I separated the trunks from the leaves so I only collide with the trunks, which makes life a lot easier for Bullet and also plays better in the game. Putting a character in the game gave me a scale to work with; before that, everything was kind of a mish-mash of sizes. I opted for a single dedicated thread for physics-mesh generation because the terrain generation pool can get bogged down at times, and we really want physics meshes immediately, not queued behind a tree 500 m away. Even a priority list didn’t seem to help much.
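A minimal sketch of the "dedicated physics thread" idea in plain `java.util.concurrent`, assuming generation jobs can be expressed as `Callable`s. The class and method names (`GenerationQueues`, `submitTerrain`, `submitPhysics`) are hypothetical; the point is only that collision-mesh jobs go to their own single-threaded executor, so they never wait behind distant terrain work in the shared pool.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical sketch: two separate queues so physics meshes never wait on terrain jobs.
final class GenerationQueues implements AutoCloseable {
    // Shared pool for heavy terrain/vegetation generation (pool size is an assumption).
    private final ExecutorService terrainPool = Executors.newFixedThreadPool(
            Math.max(1, Runtime.getRuntime().availableProcessors() - 1));

    // One dedicated thread that only ever sees physics-mesh jobs.
    private final ExecutorService physicsThread = Executors.newSingleThreadExecutor(r -> {
        Thread t = new Thread(r, "physics-mesh-gen");
        t.setDaemon(true); // don't keep the JVM alive just for this thread
        return t;
    });

    <T> Future<T> submitTerrain(Callable<T> job) {
        return terrainPool.submit(job);
    }

    <T> Future<T> submitPhysics(Callable<T> job) {
        return physicsThread.submit(job);
    }

    @Override
    public void close() {
        terrainPool.shutdownNow();
        physicsThread.shutdownNow();
    }
}
```

Because the physics executor has exactly one thread, jobs on it also run in submission order, so a nearby chunk requested first is built first, regardless of how many far-away trees are queued on the terrain pool.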

Some LOD on the terrain and batching some static objects will cut down the object count and vertex count. I guess vehicles, weapons and the sky/day-night cycle are the next things to add. Slowly creeping up to AI characters to shoot/run over/ragdoll and have a bit of fun killing stuff.

The noise generator I use is already on GitHub. A lot of the code still changes quite frequently, though a lot less lately. I’ll take a look over the next few days to see how to start releasing it in stages. It would all need a demo, too. It’s not as easy as one might think, but I have written it all as modular packages (you don’t need to use all my stuff; you can use just some of it).

Looks super nice. Just need some monsters to hunt.

One thing I noticed is that sometimes the grass ‘lines’ are really apparent, like the clumps all line up on the grid or something. (I see this in some pro games, too.) It made me wonder if the individual blade clumps need some noise offset. That’s just a nit-picky thing, though.
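One way the "noise offset" suggestion could look, as a sketch only: each clump keeps its grid cell but is shifted by a deterministic pseudo-random amount derived from the cell coordinates, so the same clump lands in the same place every time the chunk is regenerated. The integer hash below is an arbitrary mix chosen for illustration, not something from the original project.

```java
// Sketch: grid-placed grass clumps with a deterministic per-cell jitter.
final class GrassJitter {
    // Arbitrary integer hash of (cellX, cellZ, salt) mapped to a float in [0, 1).
    static float hash01(int x, int z, int salt) {
        int h = x * 374761393 + z * 668265263 + salt * 1442695041; // wraps on overflow, still deterministic
        h = (h ^ (h >>> 13)) * 1274126177;
        h ^= h >>> 16;
        return (h & 0x00FFFFFF) / (float) 0x01000000;
    }

    /**
     * World {x, z} of the clump in cell (cx, cz).
     * jitter is a fraction of spacing: 0 = perfect grid, 0.5 = up to half a cell either way.
     */
    static float[] clumpPosition(int cx, int cz, float spacing, float jitter) {
        float ox = (hash01(cx, cz, 1) - 0.5f) * 2f * jitter * spacing;
        float oz = (hash01(cx, cz, 2) - 0.5f) * 2f * jitter * spacing;
        return new float[] { cx * spacing + ox, cz * spacing + oz };
    }
}
```

Keeping `jitter` below 0.5 stops neighbouring clumps from swapping cells; the same hash with a third salt could also drive a random yaw per clump.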

WIP lipsync

based on topic https://hub.jmonkeyengine.org/t/lipsync-sound-amplitude-data-listener/

- used rhubarb-lip-sync to determine which mouth shape to show (based on its exported file)

- used shape keys to match the mouth shapes

- wrote a quick AssetManager loader for the lipsync file

- wrote some simple code that follows sayAudio.getPlaybackTime() and blends between shapes

- done
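The last two steps could look roughly like this, assuming the TSV export of Rhubarb Lip Sync (one `seconds<TAB>shape` cue per line, shapes `A` to `H` plus `X` for rest). `LipSyncTrack` and its methods are hypothetical names, and the linear crossfade length is a tuning guess; the playback time would come from something like `sayAudio.getPlaybackTime()`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: parse Rhubarb TSV cues and look up the mouth shape for a playback time.
final class LipSyncTrack {
    /** From {@code time} (seconds) onward, show mouth shape {@code shape}. */
    record Cue(float time, char shape) {}

    private final List<Cue> cues = new ArrayList<>();

    static LipSyncTrack parseTsv(String text) {
        LipSyncTrack track = new LipSyncTrack();
        for (String line : text.split("\\R")) {
            String trimmed = line.trim();
            if (trimmed.isEmpty()) continue;
            String[] parts = trimmed.split("\t");
            track.cues.add(new Cue(Float.parseFloat(parts[0]), parts[1].trim().charAt(0)));
        }
        return track;
    }

    /** Binary search: index of the last cue starting at or before t, or -1 if none. */
    private int indexAt(float t) {
        int lo = 0, hi = cues.size() - 1, found = -1;
        while (lo <= hi) {
            int mid = (lo + hi) >>> 1;
            if (cues.get(mid).time() <= t) { found = mid; lo = mid + 1; }
            else { hi = mid - 1; }
        }
        return found;
    }

    /** Shape being blended towards at time t ('X' = rest before the first cue). */
    char shapeAt(float t) {
        int i = indexAt(t);
        return i < 0 ? 'X' : cues.get(i).shape();
    }

    /** Shape being blended away from at time t. */
    char previousShapeAt(float t) {
        int i = indexAt(t);
        return i < 1 ? 'X' : cues.get(i - 1).shape();
    }

    /** Weight of shapeAt(t) in a linear crossfade lasting blendSeconds after each cue. */
    float blendAt(float t, float blendSeconds) {
        int i = indexAt(t);
        if (i < 0) return 1f;
        return Math.min(1f, (t - cues.get(i).time()) / blendSeconds);
    }
}
```

Each frame, the shape key for `shapeAt(t)` would get weight `w = blendAt(t, ...)` and the one for `previousShapeAt(t)` would get `1 - w`; how those weights reach the model depends on how its shape keys are exposed.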

More improvements to my prototype: added a dive, discrete jump heights, and a bomb thrower including the explosion. Amazing how simple and even painless it was, except for the controller fine-tuning; that was (and still is) a bit of a pain.
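The post doesn’t say how the discrete jump heights were implemented. One common approach, sketched here under the assumption of constant gravity and no drag, is to pick the apex heights you want and solve for the take-off speed from $v_0 = \sqrt{2 g h}$. The class name and the example numbers are made up.

```java
// Sketch: take-off speed for a desired apex height, assuming constant gravity and no drag.
final class JumpHeights {
    /** v0 = sqrt(2 * g * h): the initial upward speed that peaks exactly at apexHeight. */
    static float takeOffSpeed(float apexHeight, float gravity) {
        return (float) Math.sqrt(2.0 * gravity * apexHeight);
    }

    /** t = v0 / g: time to reach the apex, useful when tuning how "floaty" a jump feels. */
    static float timeToApex(float apexHeight, float gravity) {
        return takeOffSpeed(apexHeight, gravity) / gravity;
    }
}
```

For example, with a gravity of 10 and a target height of 5, the take-off speed is 10 and the apex is reached after 1 second. A short tap and a long press could then each map to one fixed height.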

Not related to jMonkeyEngine, but I’m working on a 3D software renderer to learn more about graphics.

Using pure Java and AWT.
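For readers curious what the core of such a renderer looks like, here is a minimal sketch of the projection step, not the poster’s code. It assumes an OpenGL-style camera looking down −Z, a vertical field of view in radians, and AWT’s convention that pixel (0, 0) is the top-left corner.

```java
// Sketch: pinhole perspective projection from camera space to AWT screen coordinates.
final class Projection {
    /** Returns {screenX, screenY, depth}, or null if the point is in front of the near plane. */
    static double[] project(double x, double y, double z,
                            double fovY, int width, int height, double near) {
        if (-z < near) return null;              // behind the camera or too close: needs clipping
        double f = 1.0 / Math.tan(fovY / 2.0);   // focal length for the given field of view
        double aspect = (double) width / height;
        double ndcX = (f / aspect) * x / -z;     // perspective divide -> [-1, 1] when on screen
        double ndcY = f * y / -z;
        double sx = (ndcX + 1.0) * 0.5 * width;
        double sy = (1.0 - ndcY) * 0.5 * height; // flip: AWT's y axis grows downward
        return new double[] { sx, sy, -z };      // keep the distance for depth testing later
    }
}
```

The returned depth is what a later z-buffer pass would compare; the `null` case is where proper near-plane clipping would eventually go.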

Next come texturing, z-buffering, clipping, lighting, etc.
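A z-buffer is one of the simpler items on that list. This sketch keeps one depth value per pixel, initialised to "infinitely far", and only writes a fragment if it is nearer than what is already stored. It assumes smaller depth means closer (for example the camera-space distance), and stores colours as packed ARGB ints, the same layout `BufferedImage.TYPE_INT_ARGB` uses.

```java
import java.util.Arrays;

// Sketch: per-pixel depth test for a software rasterizer.
final class DepthBuffer {
    final int width, height;
    final float[] depth;
    final int[] color; // packed ARGB, row-major

    DepthBuffer(int width, int height) {
        this.width = width;
        this.height = height;
        depth = new float[width * height];
        color = new int[width * height];
        clear(0xFF000000);
    }

    /** Reset every pixel to "infinitely far away" and the given background colour. */
    void clear(int argb) {
        Arrays.fill(depth, Float.POSITIVE_INFINITY);
        Arrays.fill(color, argb);
    }

    /** Depth test plus write; returns true if the fragment was visible. */
    boolean plot(int x, int y, float z, int argb) {
        if (x < 0 || y < 0 || x >= width || y >= height) return false;
        int i = y * width + x;
        if (z >= depth[i]) return false; // something nearer is already drawn here
        depth[i] = z;
        color[i] = argb;
        return true;
    }
}
```

With AWT, the finished `color` array can be copied into an image in one call with `image.setRGB(0, 0, width, height, color, 0, width)`.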

I’ll move to quaternions as soon as I understand them…

This will probably never happen. You can use them without understanding them.

In fact, when someone tells me they totally understand quaternions, I have an imaginary narrator in my head saying “They badly misunderstand quaternions.”

To understand how quaternions represent 3-D rotations, I think it helps to first understand how complex numbers represent 2-D rotations. If that’s not a familiar concept, study complex numbers first.
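To make that analogy concrete, a small sketch using plain doubles (no library types): multiplying a 2D point, treated as a complex number, by $\cos a + i \sin a$ rotates it by $a$; in 3D, the unit quaternion $q = (\cos\frac{a}{2}, \sin\frac{a}{2}\,\hat{n})$ rotates a vector $v$ via $q\,v\,\bar{q}$. Quaternions are stored here as `{w, x, y, z}`.

```java
// Sketch: complex-number rotation in 2D next to quaternion rotation in 3D.
final class Rotations {
    /** (x + iy) * (cos a + i sin a): rotates the 2D point (x, y) by angle a. */
    static double[] rotate2D(double x, double y, double a) {
        double c = Math.cos(a), s = Math.sin(a);
        return new double[] { x * c - y * s, x * s + y * c };
    }

    /** Hamilton product p * q of quaternions stored as {w, x, y, z}. */
    static double[] mul(double[] p, double[] q) {
        return new double[] {
            p[0] * q[0] - p[1] * q[1] - p[2] * q[2] - p[3] * q[3],
            p[0] * q[1] + p[1] * q[0] + p[2] * q[3] - p[3] * q[2],
            p[0] * q[2] - p[1] * q[3] + p[2] * q[0] + p[3] * q[1],
            p[0] * q[3] + p[1] * q[2] - p[2] * q[1] + p[3] * q[0]
        };
    }

    /** Rotates v by angle a (radians) around the unit-length axis (ax, ay, az) via q v conj(q). */
    static double[] rotate3D(double[] v, double ax, double ay, double az, double a) {
        double s = Math.sin(a / 2);
        double[] q = { Math.cos(a / 2), ax * s, ay * s, az * s }; // note the half angle
        double[] qConj = { q[0], -q[1], -q[2], -q[3] };
        double[] r = mul(mul(q, new double[] { 0, v[0], v[1], v[2] }), qConj);
        return new double[] { r[1], r[2], r[3] };
    }
}
```

Rotating (1, 0) by 90° gives (0, 1), and rotating (1, 0, 0) by 90° around the Z axis gives (0, 1, 0): the same rotation, once in the complex plane and once with a quaternion. The half angle appears because the vector is multiplied by `q` on both sides.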

Here’s a concise summary of quaternions that I found helpful when I was learning: https://www.geometrictools.com/Documentation/Quaternions.pdf

Haha, at least I realize that I’m clueless.

Thanks, that’ll come in useful. I’ve started with this one: Understanding Quaternions | 3D Game Engine Programming

It does go over some complex-number math.

This was more of an experiment, but it might be interesting to some of you here. While watching the clouds outside, I had thought a couple of times that they look kind of like a heightmap. So the last time it happened, I took a picture of them, and today I imported it as a terrain heightmap.

The first thing I tried was simply converting it to grayscale and importing it. It didn’t look the best; the mountain where the central cloud was in the picture looks okay, but everything else is way too jagged.
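The grayscale step can be sketched with the JDK’s `BufferedImage`: each pixel’s brightness becomes a height in [0, 1]. This uses Rec. 601 luma weights as an assumption; the post doesn’t say which grayscale conversion the image editor applied.

```java
import java.awt.image.BufferedImage;

// Sketch: photo -> heightmap by taking per-pixel luma, normalised to [0, 1].
final class PhotoHeightmap {
    static float[][] toHeights(BufferedImage img) {
        int w = img.getWidth(), h = img.getHeight();
        float[][] heights = new float[h][w];
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                int rgb = img.getRGB(x, y); // packed ARGB, alpha ignored
                int r = (rgb >> 16) & 0xFF, g = (rgb >> 8) & 0xFF, b = rgb & 0xFF;
                float luma = 0.299f * r + 0.587f * g + 0.114f * b; // 0..255
                heights[y][x] = luma / 255f;
            }
        }
        return heights;
    }
}
```

Loading the photo would be something like `ImageIO.read(new File("clouds.png"))`, where the file name is just a placeholder. Bright cloud tops become peaks and blue sky becomes low ground, which is why small brightness variations in the clouds turn into jagged terrain.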

Obviously, my next idea was to adjust the levels a bit, but that didn’t help much; I ended up having to apply quite a lot of blur to get even half-decent results. A lot of jaggedness is still there, and a lot of detail was lost in the process.
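For anyone who wants to do the smoothing in code rather than in an image editor, here is a simple box blur over a heightmap, offered as a sketch. Each sample is averaged with its neighbours within `radius`, and edges are clamped. A Gaussian blur behaves similarly but weights nearer pixels more.

```java
// Sketch: box blur for a float heightmap with clamped edges.
final class HeightBlur {
    static float[][] boxBlur(float[][] src, int radius) {
        int h = src.length, w = src[0].length;
        float[][] out = new float[h][w];
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                float sum = 0;
                int n = 0;
                for (int dy = -radius; dy <= radius; dy++) {
                    for (int dx = -radius; dx <= radius; dx++) {
                        // Clamp to the border so edge samples reuse the nearest valid pixel.
                        int sy = Math.min(h - 1, Math.max(0, y + dy));
                        int sx = Math.min(w - 1, Math.max(0, x + dx));
                        sum += src[sy][sx];
                        n++;
                    }
                }
                out[y][x] = sum / n;
            }
        }
        return out;
    }
}
```

This is O(width × height × radius²), which is fine for a one-off import. The trade-off described above shows up directly: a bigger radius removes more jaggedness but also averages away real detail.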

So, overall, is this a decent way to make terrain easily? I’d say no; the results aren’t the best. But it was fun trying it.

I wonder if using the unfiltered one to lightly modulate the blurred one would be interesting. (Edit: I think this could still be done at the image manipulation stage.)
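One possible reading of that idea, as a sketch: keep the blurred map as the smooth base and add back only a small fraction of the high-frequency detail, i.e. $h = b + k\,(r - b)$, where $r$ is the raw map and $b$ the blurred one. `detailAmount` is a hypothetical tuning parameter: 0 gives the blurred map and 1 gives the raw photo back.

```java
// Sketch: modulate a blurred heightmap with a fraction of the raw map's detail.
final class DetailBlend {
    static float[][] blend(float[][] raw, float[][] blurred, float detailAmount) {
        int h = raw.length, w = raw[0].length;
        float[][] out = new float[h][w];
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                // (raw - blurred) is the detail the blur removed; add back only some of it.
                out[y][x] = blurred[y][x] + detailAmount * (raw[y][x] - blurred[y][x]);
            }
        }
        return out;
    }
}
```

Small values such as 0.1 to 0.3 would be a reasonable starting point to experiment with, since larger ones bring the original jaggedness back.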

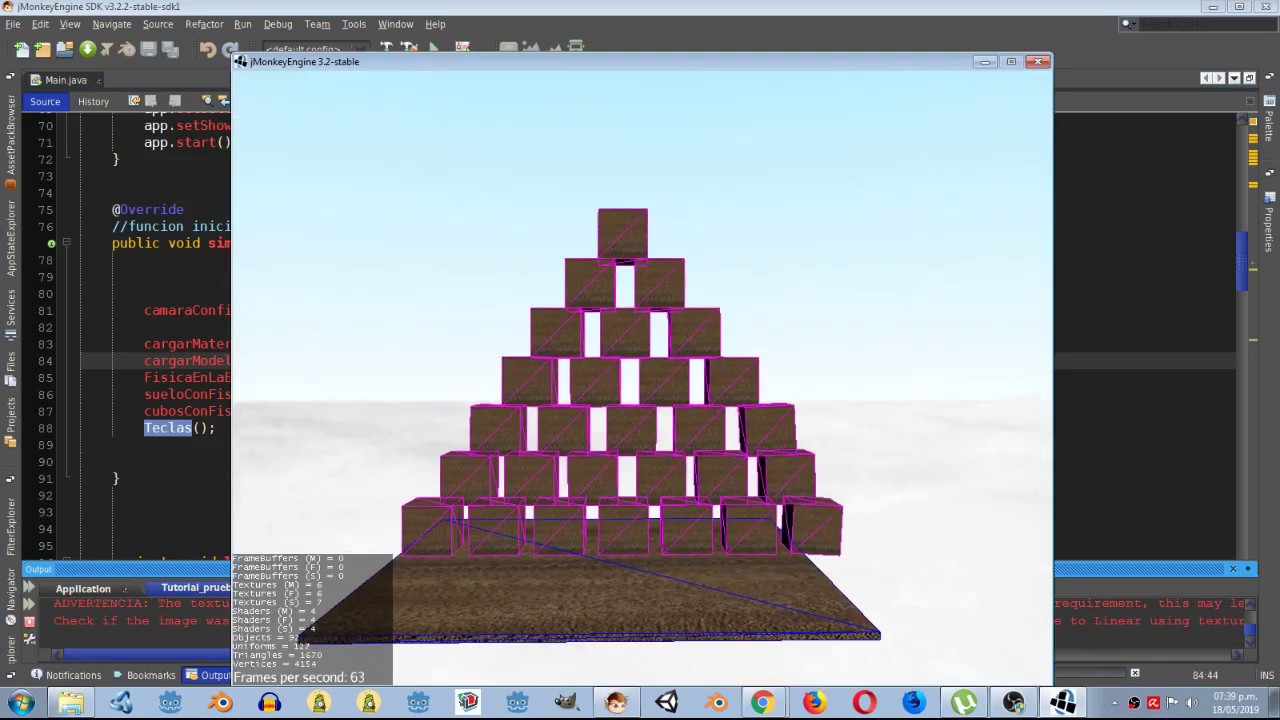

In progress…

First thought: Wow, those procedural clouds look amaz-- ah, that’s a photo. (At least I had faith in your ability, right?)

Maybe you’re aware of the sampling data loss, but I’ve seen the same craziness happen when sampling data at a lower resolution than the source image. I think that’s what’s happening here. It skips over data entirely, causing height deltas that are far more drastic than what you see in the photo.

I guess your source image dimensions (assuming it’s just sampling pixels) didn’t match the number of vertices in the ground terrain. Or maybe you don’t have much control over that because of LOD. But if you’re further away, the sampling difference shouldn’t matter as much, so “turning up the LOD to ultra settings” would probably also help, if that’s possible.
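The effect described here can be sketched by comparing two ways of shrinking a heightmap: point sampling, which picks every Nth pixel, and block averaging, which averages each N × N block. With point sampling, a thin ridge is either hit at full height or skipped entirely, which produces abrupt steps. Averaging keeps the transition gradual. This assumes the size divides evenly by the factor.

```java
// Sketch: point sampling vs. block averaging when reducing a heightmap by an integer factor.
final class Downsample {
    /** Picks every factor-th sample; anything in between is ignored. */
    static float[][] pointSample(float[][] src, int factor) {
        int h = src.length / factor, w = src[0].length / factor;
        float[][] out = new float[h][w];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++)
                out[y][x] = src[y * factor][x * factor];
        return out;
    }

    /** Averages each factor x factor block, so every source sample contributes. */
    static float[][] boxAverage(float[][] src, int factor) {
        int h = src.length / factor, w = src[0].length / factor;
        float[][] out = new float[h][w];
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                float sum = 0;
                for (int dy = 0; dy < factor; dy++)
                    for (int dx = 0; dx < factor; dx++)
                        sum += src[y * factor + dy][x * factor + dx];
                out[y][x] = sum / (factor * factor);
            }
        }
        return out;
    }
}
```

When the image and the terrain have the same resolution (factor 1), both methods return the original samples, so this particular effect would not apply.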

Good idea. It would add some detail back, but I’d still have to figure out something to fix the jaggedness.

Both the image and the terrain had 1024 pixels/vertices. And yes, I turned the LOD distance to 9001 because everything looked garbage otherwise.

I’m not quite sure what sampling data loss would have to do with this. Could you elaborate?

I guess you figured it out already, but I just meant that if you’re sampling a 5000x5000 pixel image but have far fewer vertices (500x500 or something), you’d be losing the data needed to make the terrain changes gradual. But since that’s not your case, it must be something else.

Maybe your min/max range (“levels”?) is just too large. You could try increasing the contrast of the source image a bit (and reducing the max height) to get some more shades of grey. Looking at it a second time, I kind of doubt you’ll get a good “mountain” style terrain out of it without major manipulation.
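A sketch of what a "levels" remap on the height values could look like. Values between `inBlack` and `inWhite` are stretched to [0, 1], clamped outside that range, and then scaled by `maxHeight`. Narrowing the input range raises contrast, and lowering `maxHeight` keeps the stretched result from turning into spikes. The parameter names are illustrative only.

```java
// Sketch: levels-style remap of a normalised height value, followed by height scaling.
final class Levels {
    static float remap(float v, float inBlack, float inWhite, float maxHeight) {
        float t = (v - inBlack) / (inWhite - inBlack); // stretch the chosen input range to 0..1
        t = Math.max(0f, Math.min(1f, t));             // clamp: below black = 0, above white = 1
        return t * maxHeight;
    }
}
```

This also shows why a "flat-looking" source can still produce wild terrain: the final height is a multiple of the normalised value, so a large `maxHeight` or a narrow input range magnifies small brightness differences.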

I’ve done a bunch of heightmap experiments with pre-generated Perlin noise before, and that experience makes me feel like the whites in the picture are a little too similar to create mountain-like terrain. The blues especially so: those areas should be basically flat. I’ve found that if you multiply your values enough, even a fairly “flat” looking heightmap gets crazy.

For example, there’s a lot more variance in the white and grey tones here, which I think would create a softer “hilly” region:

And to compare with real-life terrain, here’s a sample I grabbed from some GIS software I have (QGIS). Pretty big differences in brightness (Swiss Alps):

A bit high-altitude to work here, though.