Limiting things to 60 FPS regardless of monitor refresh is one approach but then you never take advantage of the higher refresh for smoother animations.

GameLoop is not the only way to drive a GameSystemManager but I only used these as examples anyway.

Mythruna uses these for the backend which means that the network events will be quantized to 60 FPS (at best). But because everything is happening on different threads (like the server), the client cannot just march along in lock step… as there is no such thing as lock step. The visualization is always interpolating between two frames of reference, ideally the very most recent and the one just before that. (It renders at a 100ms to 200ms delay as described in the networking documents linked a bunch of times before.) ANY time you decouple movement “frames” from the rendering frames you need to do some kind of interpolation or you will have jitter as the different frame sources sync and randomly unsync.
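A minimal sketch of that kind of interpolation, assuming each frame of reference is just a nanosecond timestamp plus a one-dimensional position. `FrameInterpolator` and `Sample` are hypothetical names for illustration, not Mythruna's actual code:

```java
final class FrameInterpolator {

    /** One timestamped frame of reference (hypothetical, 1D for brevity). */
    static final class Sample {
        final long timeNanos;
        final double position;

        Sample(long timeNanos, double position) {
            this.timeNanos = timeNanos;
            this.position = position;
        }
    }

    /**
     * Blends the two samples that bracket renderTimeNanos. The render time
     * is expected to be "now" minus the chosen delay (100ms to 200ms above).
     */
    static double interpolate(Sample older, Sample newer, long renderTimeNanos) {
        long span = newer.timeNanos - older.timeNanos;
        if (span <= 0) {
            // Degenerate pair of samples: nothing to blend.
            return newer.position;
        }
        // Subtract the longs first, then convert to a fraction once.
        double t = (double)(renderTimeNanos - older.timeNanos) / span;
        // Clamp so a late or early render time never extrapolates.
        t = Math.max(0.0, Math.min(1.0, t));
        return older.position + (newer.position - older.position) * t;
    }
}
```

The blend factor comes from a single subtraction of two longs, so it doesn't matter how many frames have gone by since startup.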

The above is only to explain how I do it, not to suggest that it is the best way for you. It may be why I don’t see the same issues: turning a long value between two long values into a float ‘time’ is going to be a lot more accurate than accumulating a float tpf.
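A rough way to see the difference, assuming a made-up fixed frame time of 16,666,667 ns (about 60 FPS) over one simulated hour. `TimeDriftDemo` is a hypothetical class for illustration only:

```java
final class TimeDriftDemo {

    /** Sums a float tpf once per frame, the way a float-based update() does. */
    static float accumulateFloat(long frames, long frameNanos) {
        float tpf = (float)(frameNanos / 1_000_000_000.0);
        float time = 0f;
        for (long i = 0; i < frames; i++) {
            time += tpf;   // every add rounds to the nearest float
        }
        return time;
    }

    /** Keeps elapsed time as a long and converts to seconds in one step. */
    static double fromNanos(long frames, long frameNanos) {
        long elapsed = frames * frameNanos;   // exact integer arithmetic
        return elapsed / 1_000_000_000.0;
    }

    public static void main(String[] args) {
        long frames = 60L * 60L * 60L;   // one hour of frames at 60 FPS
        long frameNanos = 16_666_667L;   // assumed constant frame time
        System.out.println("float accumulation: " + accumulateFloat(frames, frameNanos));
        System.out.println("from long nanos:    " + fromNanos(frames, frameNanos));
    }
}
```

The float total drifts because each addition is rounded at the accumulator's current magnitude, and those rounding errors pile up. The long-based value is rounded only once, at the final conversion.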

I do not know the best way to get JME animation converted to accumulate with double. Code-wise, it’s a shame because a lot of it is double-based but is forced into float accumulation because of Control.update(). To avoid this, I think you’d have to supplant the existing update and accumulate your own double-based time, taking care to wrap it for the particular animation, etc…

I’m quickly looking through my code just to see if there is anything interesting. The MOSS libraries (unreleased Mythruna Open Source Software) have a character rig package for managing rigged characters. So every one of my network-driven animated characters is really managed by a character rig.

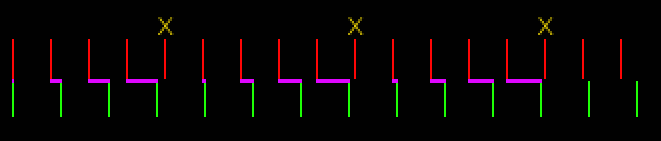

The first thing the character rig does is kill JME’s normal animation:

    public AnimComposerRig( AnimComposer anim ) {
        this.anim = anim;
        this.originalSpeed = anim.getGlobalSpeed();
        anim.setGlobalSpeed(0);
    }

…setting global speed to 0 means that the regular update() will never update anything.

After that, I always provide time externally:

    public void setTime( String layerId, double time ) {
        if( layerId == null ) {
            layerId = AnimComposer.DEFAULT_LAYER;
        }
        AnimLayer layer = anim.getLayer(layerId);
        if( layer.getCurrentAction() != null ) {
            layer.setTime(time);
        } else {
            log.warn("No current action for layer:" + layerId);
        }
    }

Thankfully, JME’s AnimLayer is already double-based for setTime().

…I think that’s the Lemur tween influence.

So in theory, you could do something similar in your own code. Have an app state that wraps all of your AnimComposers in some driver object. The app state could keep track of long nanoTime() from some start value and convert it to double every frame: (current - start) / NANOS_PER_SECOND (with NANOS_PER_SECOND as a double, so the division isn’t truncated to whole seconds)

…then pass that to all of the wrappers.
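A minimal sketch of that idea in plain Java, so it runs without JME on the classpath. `AnimationClock` and `TimeDriven` are hypothetical names. `TimeDriven` stands in for whatever driver object wraps each AnimComposer:

```java
import java.util.ArrayList;
import java.util.List;

final class AnimationClock {

    /** Hypothetical stand-in for a per-AnimComposer driver object. */
    interface TimeDriven {
        void setTime(double seconds);
    }

    // A double on purpose: long / long would truncate to whole seconds.
    private static final double NANOS_PER_SECOND = 1_000_000_000.0;

    private final List<TimeDriven> drivers = new ArrayList<>();
    private final long start;

    AnimationClock(long startNanos) {
        this.start = startNanos;
    }

    void add(TimeDriven driver) {
        drivers.add(driver);
    }

    /** Call once per frame with the current System.nanoTime() value. */
    double update(long currentNanos) {
        // Subtract as longs, then convert to seconds exactly once.
        double time = (currentNanos - start) / NANOS_PER_SECOND;
        for (TimeDriven driver : drivers) {
            driver.setTime(time);
        }
        return time;
    }
}
```

In a JME application, an app state’s update(float tpf) could ignore tpf and call `clock.update(System.nanoTime())` instead. Each driver would still need to wrap the time for its own animation length if it loops.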