I have been trying quite a few different methods over the last week or more to accomplish 2D shadows and lighting in JME. For those who are unsure of what I mean, I am talking about this:

I want to do this on the GUI node because it functions how I would expect a 2D game to function (x and y are based on the resolution, and z is basically a z-order). I already know about parallel projection for the camera so that it faces directly down onto a 3D scene, but I'm effectively trying to draw a 2D scene in a 3D scene. It doesn’t look right and I really don’t want to go down that route. I very much prefer using the GUI node and I would appreciate it if this discussion did not involve doing it any other way. I appreciate that doing it in the GUI node means I’ll have to “roll my own” lighting and shadows, but I knew that anyway. The JME lights and shadows are for 3D environments, not 2D.

I’ll list my points of reference first, just so it’s easier to understand my thought process:

- 2D Pixel Perfect Shadows · mattdesl/lwjgl-basics Wiki · GitHub

- My technique for the shader-based dynamic 2D shadows | Catalin ZZ

- http://wonderfl.net/c/foPH/read

As far as I am aware, this is the process to produce this result:

- Create an “occlusion map” - create a black and white texture that displays all items that cast a shadow in black, and the rest in white.

http://puu.sh/g8ed0/fa4d763b82.png

In the image above, the occlusion map shows that the black area will cast a shadow, and the white area will not.

- Create a “distance map” - create a black and white texture that represents the distance of these objects from the center of the image (the light origin).

http://puu.sh/g8en1/27ad285209.png

The image above isn’t quite right - I’ve been tampering with the code…

- Create a “reduced” distance map - create a 1D texture that uses rectangular-to-polar conversion to determine the distance based on the shade of grey.

http://puu.sh/g8gnL/d18c4319e7.png

I managed to get this somewhat working using the CPU. It’s slow as hell and I would really like to get this thing on the GPU to speed things up.
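For comparison, here is a minimal CPU sketch of the steps listed above, useful mainly as a reference to check GPU output against. It is not the code from this post: plain Java arrays stand in for the textures, the names `ShadowMapSketch` and `reduce` and the square `boolean[][]` occlusion grid are made up for illustration, and the separate distance map is folded into a ray march per angle (which, as I understand it, is roughly what the lwjgl-basics write-up does).

```java
final class ShadowMapSketch {
    /**
     * Builds a 1D "reduced" distance map from a square occlusion grid.
     * For each of `rays` angles around the centre (the light origin) it stores
     * the normalised distance (0..1) to the nearest occluder, or 1.0 if the
     * ray reaches the edge of the grid without hitting anything.
     */
    static float[] reduce(boolean[][] occluder, int rays) {
        int size = occluder.length;      // assumption: grid is size x size
        float half = size / 2f;
        float[] reduced = new float[rays];
        for (int i = 0; i < rays; i++) {
            // Same angle convention as the shader: coord = (theta + PI) / (2 * PI)
            double theta = (i / (double) rays) * 2.0 * Math.PI - Math.PI;
            float nearest = 1f;
            // March outwards from the centre one pixel at a time
            for (int step = 0; step < half; step++) {
                float r = step / half;
                int x = (int) (half + Math.cos(theta) * r * half);
                int y = (int) (half + Math.sin(theta) * r * half);
                if (x < 0 || y < 0 || x >= size || y >= size) {
                    break;               // left the grid: nothing hit
                }
                if (occluder[y][x]) {
                    nearest = r;         // first hit along this ray
                    break;
                }
            }
            reduced[i] = nearest;
        }
        return reduced;
    }
}
```

With a 64x64 grid and a wall at x = 48, the ray pointing along +x (index `rays / 2`) stores 0.5, while the ray pointing along -x stores 1.0. The work is `rays * size / 2` samples per light rather than one pass per map over every pixel, but it is still CPU-bound.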

I managed to get a fragment shader working that does the last step (polar conversion) to display the lighting somewhat - although it’s still buggy.

    uniform sampler2D m_reducedMap;
    uniform vec2 resolution;
    uniform vec2 lightPosition;

    varying vec2 texCoord;

    const float PI = 3.1415927;

    // sample from the 1D distance map: 1.0 if the nearest occluder
    // is at or beyond r (lit), 0.0 otherwise (in shadow)
    // (not named "sample", which is a reserved word in newer GLSL versions)
    float sampleMap(vec2 coord, float r)
    {
        return step(r, texture2D(m_reducedMap, coord).r);
    }

    vec4 processReducedMap()
    {
        // rectangular to polar
        vec2 norm = texCoord.st * 2.0 - 1.0;
        float theta = atan(norm.y, norm.x);
        float r = length(norm);
        float coord = (theta + PI) / (2.0 * PI);

        // the tex coord to sample our 1D lookup texture
        // always 0.0 on y axis, so the angle goes in x
        vec2 tc = vec2(coord, 0.0);

        // the center tex coord, which gives us hard shadows
        float center = sampleMap(tc, r);

        // we multiply the blur amount by our distance from center
        // this leads to more blurriness as the shadow "fades away"
        // the blur runs along x, so one texel is 1.0 / resolution.x
        float blur = (1.0 / resolution.x) * smoothstep(0.0, 1.0, r);

        // now we use a simple gaussian blur
        float sum = 0.0;
        sum += sampleMap(vec2(tc.x - 4.0*blur, tc.y), r) * 0.05;
        sum += sampleMap(vec2(tc.x - 3.0*blur, tc.y), r) * 0.09;
        sum += sampleMap(vec2(tc.x - 2.0*blur, tc.y), r) * 0.12;
        sum += sampleMap(vec2(tc.x - 1.0*blur, tc.y), r) * 0.15;
        sum += center * 0.16;
        sum += sampleMap(vec2(tc.x + 1.0*blur, tc.y), r) * 0.15;
        sum += sampleMap(vec2(tc.x + 2.0*blur, tc.y), r) * 0.12;
        sum += sampleMap(vec2(tc.x + 3.0*blur, tc.y), r) * 0.09;
        sum += sampleMap(vec2(tc.x + 4.0*blur, tc.y), r) * 0.05;

        // gl_FragColor = vColor * vec4(vec3(1.0), sum * smoothstep(1.0, 0.0, r));
        vec4 vColor = vec4(1.0);

        // alpha is the blurred light amount, fading out towards the edge
        return vColor * vec4(vec3(1.0), sum * smoothstep(1.0, 0.0, r));
    }

    void main()
    {
        gl_FragColor = processReducedMap();
    }

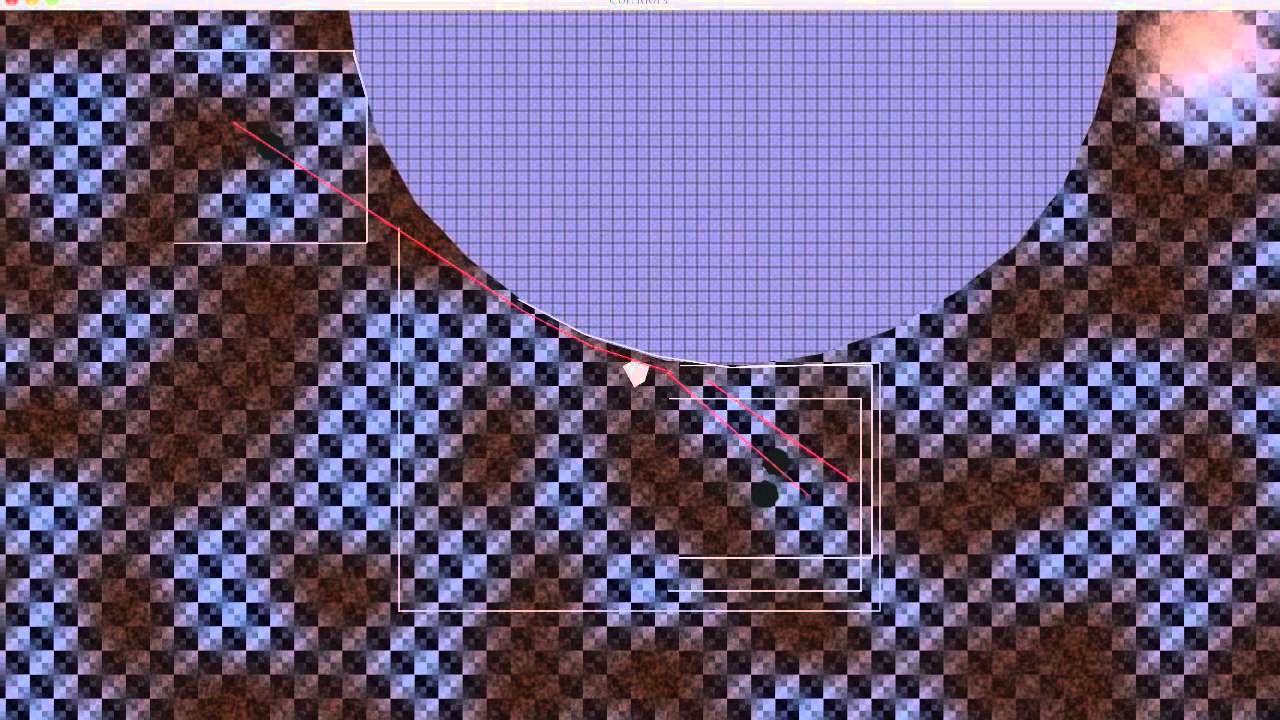
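To make the rectangular-to-polar step in the fragment shader easier to check by hand, here is a small Java port of just that arithmetic. It is a sketch for debugging on the CPU, not part of the shader; the class `PolarLookupSketch` and the methods `toPolar` and `lit` are hypothetical names, and `lit` mirrors GLSL's `step(r, stored)`.

```java
final class PolarLookupSketch {
    /**
     * Maps a texture coordinate (u, v in 0..1) to {coord, r}, where coord
     * (0..1) selects the angle in the 1D reduced map and r is the distance
     * from the centre of the quad (the light origin).
     */
    static float[] toPolar(float u, float v) {
        // 0..1 to -1..1, as in `texCoord.st * 2.0 - 1.0`
        float nx = u * 2f - 1f;
        float ny = v * 2f - 1f;
        float theta = (float) Math.atan2(ny, nx);
        float r = (float) Math.sqrt(nx * nx + ny * ny);
        float coord = (float) ((theta + Math.PI) / (2.0 * Math.PI));
        return new float[] { coord, r };
    }

    /**
     * Equivalent of GLSL step(r, stored): 1.0 when the nearest occluder
     * stored for this angle is at or beyond r, otherwise 0.0 (in shadow).
     */
    static float lit(float stored, float r) {
        return stored < r ? 0f : 1f;
    }
}
```

For example, the pixel at texCoord (1.0, 0.5) sits on the right edge of the quad: it maps to coord 0.5 and r 1.0. If the reduced map stores 0.5 for that angle, `lit(0.5f, 1.0f)` is 0.0, so the pixel is in shadow, while anything at r 0.4 along the same angle is lit.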

The fruits of my labour are somewhat frustrating - and not really working in my favour:

http://puu.sh/g8feQ/d059e73b36.png

My process involves creating each texture on the CPU - looping over X and Y to create an occlusion map, then using that map to create a distance map, then using the distance map to create a reduced map, then finally passing the reduced map to the shader.

I do this by creating a com.jme3.texture.Image and a com.jme3.texture.image.ImageRaster to modify the pixels. As expected, looping 480,000 times per frame is not cool.

I have been reading about framebuffers, and have seen one or two examples of how to use them in JME, but if I were to do this using shaders alone, I would need to get the output of a framebuffer 3 times in one frame, which I don’t think is possible.

Does anyone have any ideas on how best to approach this?

It seems to fulfill all my requirements and best of all it’s all on the GPU.
