*sigh* Here we go again…

Short version:

How does one get the normal vector of the triangle being rendered in the frag shader of a multi-polygon mesh?

List of stuff I’ve tried (in the vert shader, vNormal is a varying passed to the frag shader):

- vNormal = normalize(g_NormalMatrix * inNormal); [as seen in Lighting.vert]

- vNormal = normalize((g_WorldMatrix * vec4(0.0, 0.0, 1.0, 0.0)).xyz); [works for individual quads]

Long version

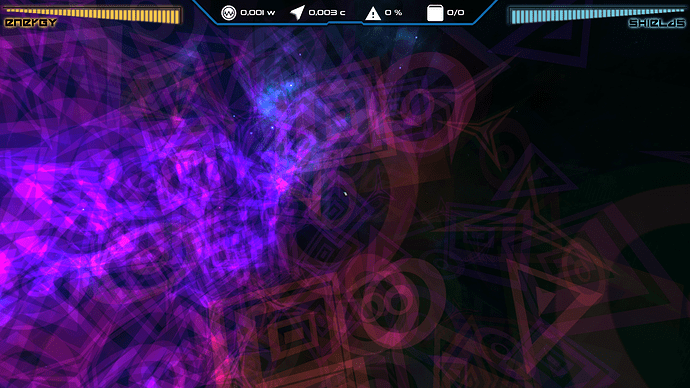

So what I have is a bunch of quads that are randomly placed in space with the same material.

Their parent node is then passed to GeometryBatchFactory.optimize(), which reduces it to a geometry that I reattach to another node to get rid of the leftover junk geometries.

Now the mesh itself renders correctly (after I reapply some parameters to it). However, the shader that used to work for individual quads no longer does its job.

It is supposed to scale the transparency of each quad by the dot product of its normal with the camera view vector, like so:

color.a = abs(dot(viewDir, vNormal)) - 0.2;

And the viewDir and vNormal are obtained in the vert shader as you can see here:

vec3 worldPosition = (g_WorldMatrix * vec4(inPosition, 1.0)).xyz;

viewDist = length(g_CameraPosition - worldPosition);

viewDir = normalize(g_CameraPosition - worldPosition).xyz;

vNormal = normalize((g_WorldMatrix * vec4(0.0, 0.0, 1.0, 0.0)).xyz);

Again, this worked for separate non-batched quads.
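
Put together, the vertex-shader side would look roughly like the sketch below. The four assignments are the ones quoted above; the uniform, attribute, and varying declarations and the gl_Position line are assumptions added only to make the snippet self-contained, following the usual jMonkeyEngine naming.

```glsl
// Vertex shader sketch (GLSL 1.x style, jMonkeyEngine naming).
// Assumed: these world parameters are listed in the material definition.
uniform mat4 g_WorldViewProjectionMatrix;
uniform mat4 g_WorldMatrix;
uniform vec3 g_CameraPosition;

attribute vec3 inPosition;

// Passed on to the frag shader.
varying vec3 viewDir;
varying vec3 vNormal;
varying float viewDist;

void main() {
    // Vertex position in world space.
    vec3 worldPosition = (g_WorldMatrix * vec4(inPosition, 1.0)).xyz;

    // Vector from the vertex to the camera, in world space.
    vec3 toCamera = g_CameraPosition - worldPosition;
    viewDist = length(toCamera);
    viewDir = normalize(toCamera);

    // Local +Z axis rotated into world space (w = 0.0 drops the translation).
    vNormal = normalize((g_WorldMatrix * vec4(0.0, 0.0, 1.0, 0.0)).xyz);

    // Assumed standard clip-space transform.
    gl_Position = g_WorldViewProjectionMatrix * vec4(inPosition, 1.0);
}
```

Written this way, vNormal depends only on g_WorldMatrix, so it comes out identical for every vertex that is drawn with the same world matrix.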

Any ideas?

P. S.

I tried the solution from here and it doesn’t seem to be working in any meaningful way.

Well this was straightforward.
