Hello jMonkeys,

While recently reworking one of my shaders, I came to the conclusion that it's time to redo my lighting approach, since it looks strange at night (I'll explain soon).

Similar to games like Minecraft, I flood-fill the light, and each transparent block as well as each light-emitting block has a light level. Blocks do not just have one light level for the sun and one for artificial light, but four channels: three for colored light (red, green and blue) and one for sunlight.

While meshing, I pack that information (the neighbours' light levels, to be exact) into a vertex buffer, so I can get the light level at each face of each block in the shaders.

Calculating the influence of the sun is straightforward: I send the current sunlight color and direction to the shader as uniforms, and inside the shader I use the normal maps to do Phong lighting with those uniforms, taking the sunLevel at this face into account.

Since the sunlight is usually quite whitish, I can multiply it with the color from the texture to dim it or show it at full brightness (for day/night), and then multiply it with the sunLevel at this face (so it is darker inside caves etc.). The colored light is different, though: pure red light, for example, has no green and blue components, so when I place a red lamp on a green grass block, the block does not get lit (multiplying red with green results in black).

On the other hand, when I add the colored light instead of multiplying it, it looks really ugly at night, since the surface loses all its texture: the sunlight is weak, so the texture color is close to black after multiplying, and adding the red light on top results in plain red faces with no visible texture.
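To make the two variants concrete, this is roughly what I tried, stripped down to the color math. The function names are made up for this post; `colorTex`, `colorSun` and `colorLight` mean the same as in the shader at the end:

```glsl
// Sketch only, function names are made up for illustration.
// colorTex   = color looked up in the texture
// colorSun   = sun color scaled by the sun level at this face
// colorLight = colored (RGB) flood-fill light at this face

// Variant 1: multiply the colored light with the texture color.
// Pure red light (1,0,0) on a pure green texel (0,1,0) gives (0,0,0),
// so the red lamp does not light the grass at all.
vec3 shadeMultiplied(vec3 colorTex, vec3 colorSun, vec3 colorLight) {
    return colorTex * colorSun + colorTex * colorLight;
}

// Variant 2: add the colored light on top of the sun-lit texture.
// At night colorSun is close to zero, so the first term vanishes and
// the result is just colorLight: a flat colored face without texture.
vec3 shadeAdded(vec3 colorTex, vec3 colorSun, vec3 colorLight) {
    return colorTex * colorSun + colorLight;
}
```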

So I decided to treat all colored light as if it came from right in front of the face, i.e. from direction (0,0,1) in tangent space, and then use the normals from the normal map, which vary around (0,0,1), to calculate dot products and thus vary the amount of colored light applied over the face. It looks OK, but I'm curious about other approaches. The falloff of the light also looks strange: all strongly lit faces are lit quite similarly, while the less light a face receives, the faster it gets dark.

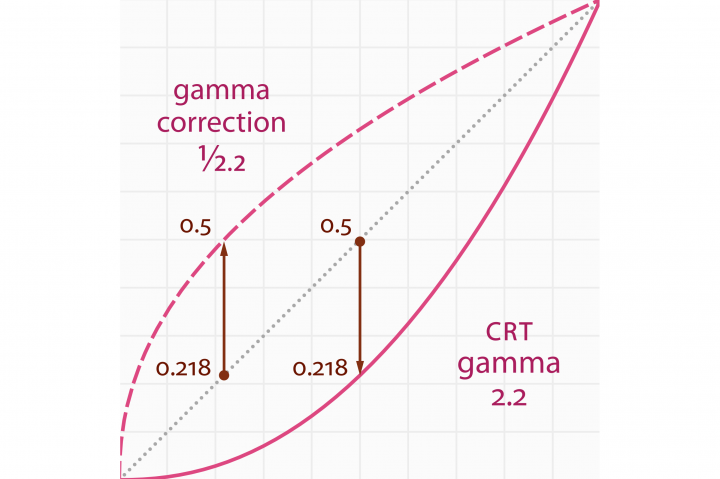

So I read about how monitors display colors, and from what I understand, when I read a value from a texture I need to convert it to linear space like this:

```glsl
vec4 color = pow(texture(m_Tex, texCoords), vec4(2.2));
```

Then I do my calculations (where I can use my linear-falloff light values from the flood fill) and later output my color like this:

```glsl
outColor = pow(calculatedColor, vec4(1.0/2.2));
```

But when I enable gammaCorrection, I can just output my color like this:

```glsl
outColor = calculatedColor;
```

because that's what the gammaCorrection setting is for?

I would like to give users a setting so they can specify their own gamma within some reasonable range. How would that interfere with, say, the Lemur GUI? I expect it means I have to turn gammaCorrection off and do the correction manually with the user-specified value.
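To make the manual variant concrete, here is a rough sketch of what I imagine, assuming gammaCorrection is switched off in the app settings. `m_Gamma` is a hypothetical material parameter (it does not exist in my material yet) that would carry the user's value, and `m_Tex`, `texCoords` and `outColor` are the same placeholder names as above. I only apply `pow` to rgb here, since as far as I understand alpha is not gamma-encoded:

```glsl
#version 150

uniform sampler2D m_Tex;
uniform float m_Gamma; // hypothetical user setting, assumed > 0 and clamped on the Java side

in vec2 texCoords;
out vec4 outColor;

void main() {
    vec4 texel = texture(m_Tex, texCoords);

    // decode the texture to linear space; 2.2 is the usual approximation
    // and is kept fixed, since it describes the texture, not the monitor
    vec3 linearColor = pow(texel.rgb, vec3(2.2));

    // ... all lighting calculations would happen here, in linear space ...
    vec3 calculatedColor = linearColor;

    // encode for display with the user's gamma instead of a fixed 2.2
    outColor = vec4(pow(calculatedColor, vec3(1.0 / m_Gamma)), texel.a);
}
```

If this is the right idea, everything else that renders into the same framebuffer (the GUI included) would presumably need the same treatment, which is the part I am unsure about.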

So, two questions:

Question 1: is treating the colored light the way I do reasonable (assuming it comes from right in front of the face, so I can dot it with the per-fragment normal)? Are there better approaches?

Question 2: where does gammaCorrection take place, and how should I treat the colors correctly inside the shaders when doing calculations?

Fragment shader code (yup, it's a mess, so I tried to add some comments). It's only the main function, since the rest is not needed for the logic:

```glsl
void main() {
    const vec2 vec01 = vec2(0.0, 1.0);

    vec3 finalTexCoord = texCoord;
    #ifdef PARALLAX
        finalTexCoord = smartSteepParallaxMapping(texCoord);
    #endif

    vec4 texColor = texture(m_TextureArray, finalTexCoord);
    #ifdef USE_DISCARD_CHECK
        if (((1.0 - step(0.9999, useTransparency)) * 2.0 - 1.0) * (1.0 - texColor.a) < -0.0001) {
            discard;
        }
    #endif

    //RAW COLORS
    vec3 colorTex = texColor.rgb; //this is the original color as looked up in the texture (which assumes no colored light and full white sunlight)
    vec3 colorSun = m_SunColor.rgb * colorRGBS.aaa; //this is the sun color provided by material parameter, scaled by sun strength at this block
    vec3 colorLight = colorRGBS.rgb; //this is the colored light at this block

    //CALCULATE FACTORS
    vec3 tangentNormal = vec01.xxy; //default normal pointing away from the face
    #ifdef NORMAL
        tangentNormal = normalize((texture(m_NormalArray, finalTexCoord).rgb) * 2.0 - 1.0); //per-fragment normal as looked up in the texture
    #endif
    vec3 worldNormal = normalize((g_WorldMatrix * vec4(TBN * tangentNormal, 0.0)).xyz); //transforms normal from tangent to world space using TBN from vertex shader

    //float diffFactorRaw = max(dot(worldNormal, -m_SunDirection), 0.0); //Phong diffuse (plain N.L)
    float diffFactorRaw = pow(dot(worldNormal, -m_SunDirection) * 0.5 + 0.5, 2.0); //Phong adjusted (wrapped to 0..1 and squared, half-Lambert style)

    float specFactorRaw = 0.0;
    vec3 specColor = vec3(0.0, 0.0, 0.0);
    #ifdef SPECULAR
        //specFactorRaw = max(dot(normalize(viewDir), normalize(reflect(m_SunDirection, worldNormal))), 0.0); //Phong
        specFactorRaw = max(dot(worldNormal, normalize(-m_SunDirection + normalize(viewDir))), 0.0); //Blinn-Phong
        specColor = texture(m_SpecularArray, finalTexCoord).rgb;
    #endif
    float specStrength = (specColor.r + specColor.g + specColor.b) / 3.0;

    float ambFactor = 0.15; //define ambient factor
    float diffFactor = 1.0 - ((1.0 - diffFactorRaw) * SUN_FACTOR); //scale the diffFactor by matParam SunFactor
    float specFactor = step(0.250000, diffFactorRaw) //only where the angle to the sun is at most 90 degrees (adjusted factor is 0.25 at exactly 90)
            * specStrength // specular strength looked up from the texture
            * pow(specFactorRaw, 32.0); // and scale it by the specular factor raised to a power
    float ssaoFactor = 1.0 - (ssao * SSAO_FACTOR); //scale ssao from vertex shader by provided matParam

    //CALCULATE COLOR COMPONENTS
    vec3 baseWorldNormal = worldNormal; //baseWorldNormal is used for light that has no direction; it is assumed to come from right in front of the face
    #ifdef NORMAL
        baseWorldNormal = normalize((g_WorldMatrix * vec4(TBN * vec01.xxy, 0.0)).xyz); //if normal is enabled, assume it points straight away from the face, which results in different dot products all over the face
    #endif
    float diffRGBFactor = dot(baseWorldNormal, worldNormal) * 0.5 + 0.5; //not sure it's a good approach

    vec3 diffuseColor = diffFactor * colorSun * colorTex;
    vec3 ambientColor = ambFactor * colorTex;
    vec3 diffuseRGBColor = diffRGBFactor * colorLight;
    vec3 specularColor = specFactor * specColor;//((vec01.yyy + colorTex + colorSun) / 3.0);//colorTex * colorSun;

    //PUT IT ALL TOGETHER SOMEHOW
    color = vec4((diffuseColor + ambientColor + diffuseRGBColor + specularColor) * ssaoFactor, 1.0);
}
```

Thanks for any input, and many greetings from the Shire,

samwise